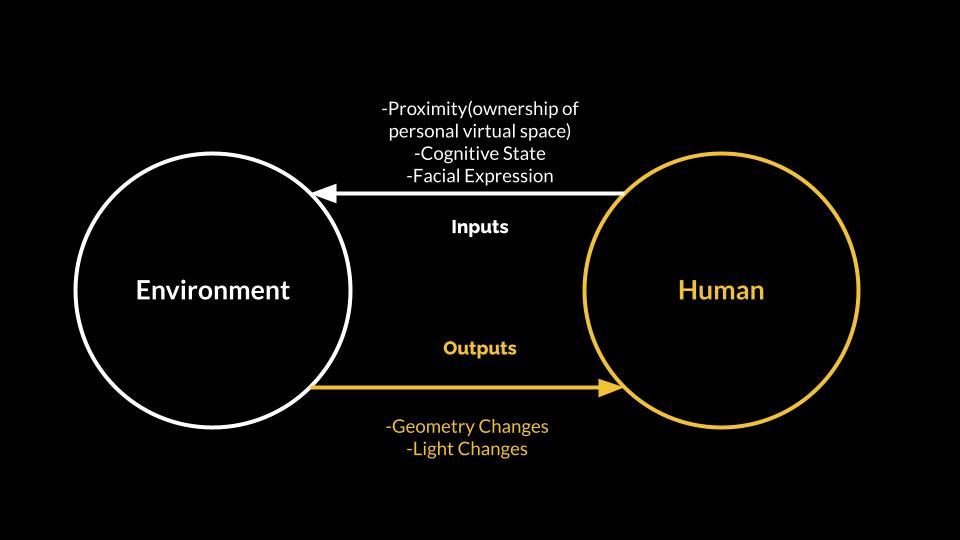

What if our living space changed its geometry and structure in response to our mental state?

About this project:

Duration: Jan. 2021 - present; Collaborator: William Qian; Mentors: Jenny Sabin, Harald, Haraldson

Keywords: Virtual Reality, Adaptive Architecture, Speculative, Brain-Computer Interface (BCI), Multi-user Experience

Context: This is an ongoing interdisciplinary project at Cornell University.

We spend most of our daily lives inside spatial enclosures with varying properties. For more than 90% of the day, we act as building dwellers and space users, navigating, perceiving, and interacting with the built environment around us. Spatial design strategies that employ techniques such as curvature, material choice, and lighting control can strongly influence space users' physiological functions and mental state.

Recent advances in mixed reality technologies let us influence how we experience our surroundings. Cedric Price once envisioned an adaptive architectural framework that could mechanically transform and adapt to the user’s needs. We believe that mixed reality technologies already enable us to change our reality without physically reconstructing it.

Process:

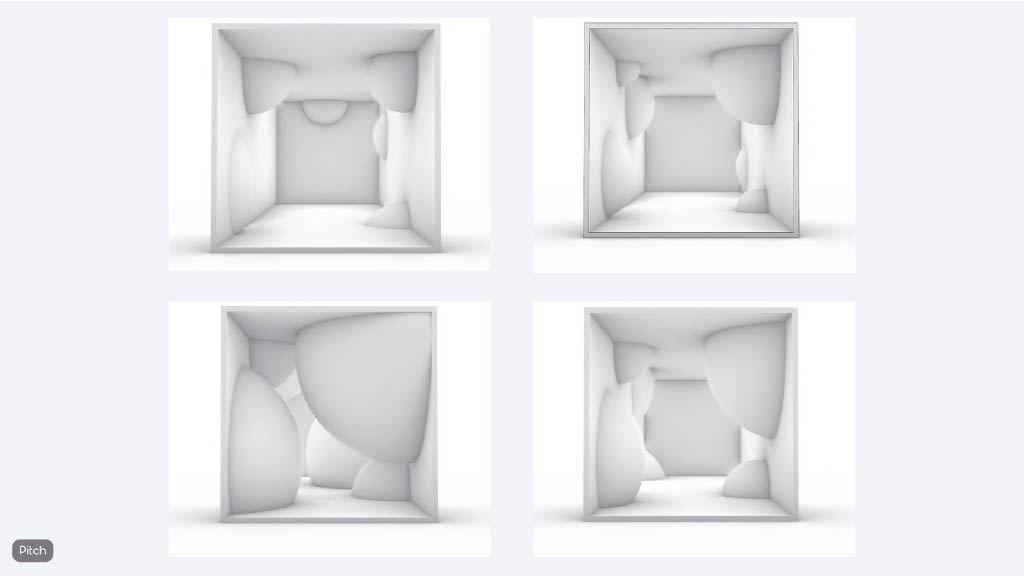

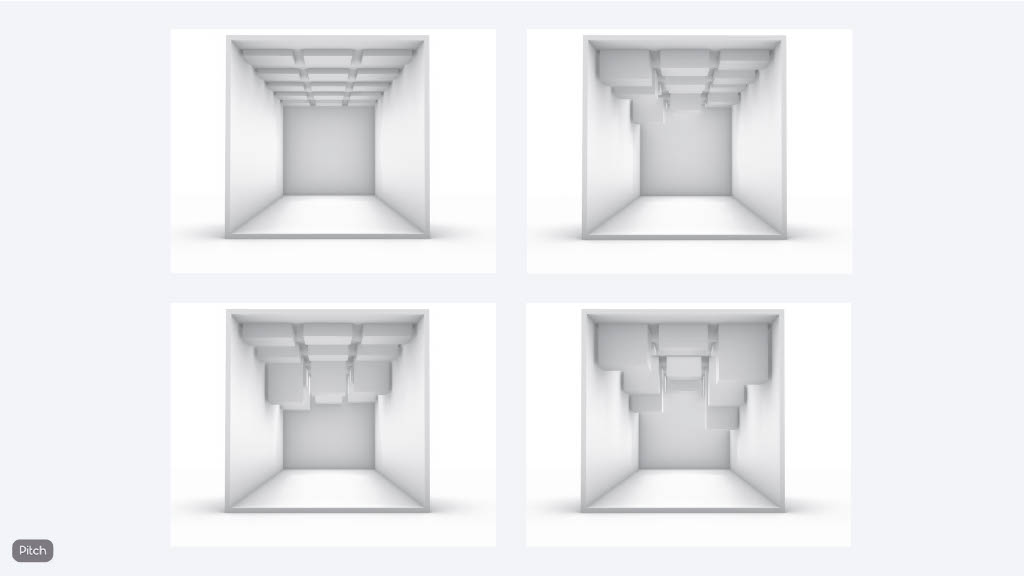

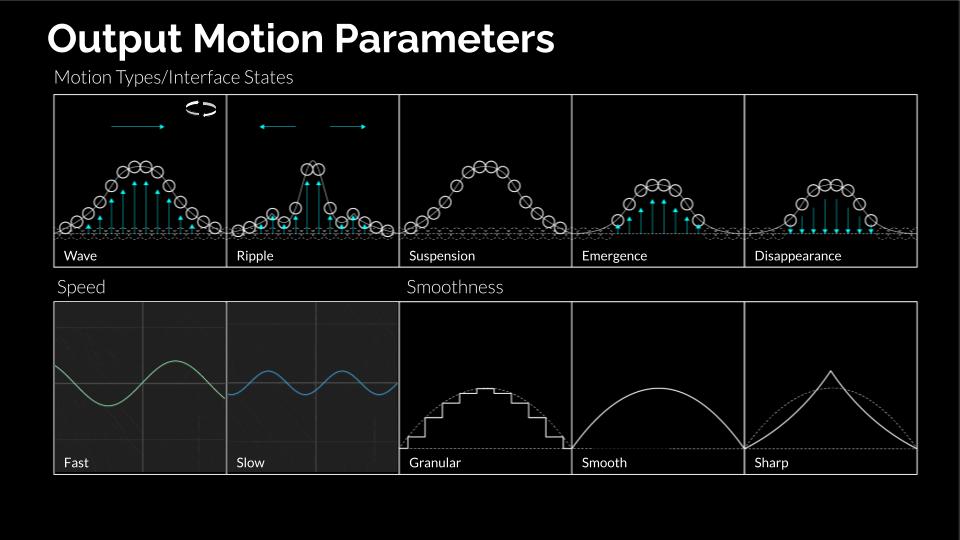

1. Spatializing Emotions

We have been through several design iterations, starting from a bottom-up approach to figure out how humans perceive shapes and the motion of objects in VR. We explore how geometry can transform along the two dimensions of emotion, arousal and valence. For example, a rounded shape with slow movement echoes a calm, less intense emotion.
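As a rough illustration of this kind of mapping, the sketch below converts a point on the valence-arousal plane into two shape parameters. The names `ShapeParams` and `emotion_to_shape`, the [-1, 1] input range, and the linear mapping (valence drives roundness, arousal drives motion speed) are assumptions made for illustration, not the project's actual implementation.

```python
from dataclasses import dataclass


@dataclass
class ShapeParams:
    roundness: float  # 0.0 = sharp and angular, 1.0 = fully rounded
    speed: float      # 0.0 = still, 1.0 = fastest motion


def clamp(x: float, lo: float, hi: float) -> float:
    """Restrict x to the closed interval [lo, hi]."""
    return max(lo, min(hi, x))


def emotion_to_shape(valence: float, arousal: float) -> ShapeParams:
    """Map valence and arousal (assumed to lie in [-1, 1]) to shape parameters.

    Assumed mapping: higher valence gives rounder forms,
    higher arousal gives faster motion.
    """
    v = clamp(valence, -1.0, 1.0)
    a = clamp(arousal, -1.0, 1.0)
    return ShapeParams(roundness=(v + 1.0) / 2.0, speed=(a + 1.0) / 2.0)


# A calm state: pleasant and low intensity.
calm = emotion_to_shape(valence=1.0, arousal=-1.0)
print(calm)
```

Under these assumptions, a calm state yields a fully rounded, motionless shape, which matches the rounded, slow-moving example in the text.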

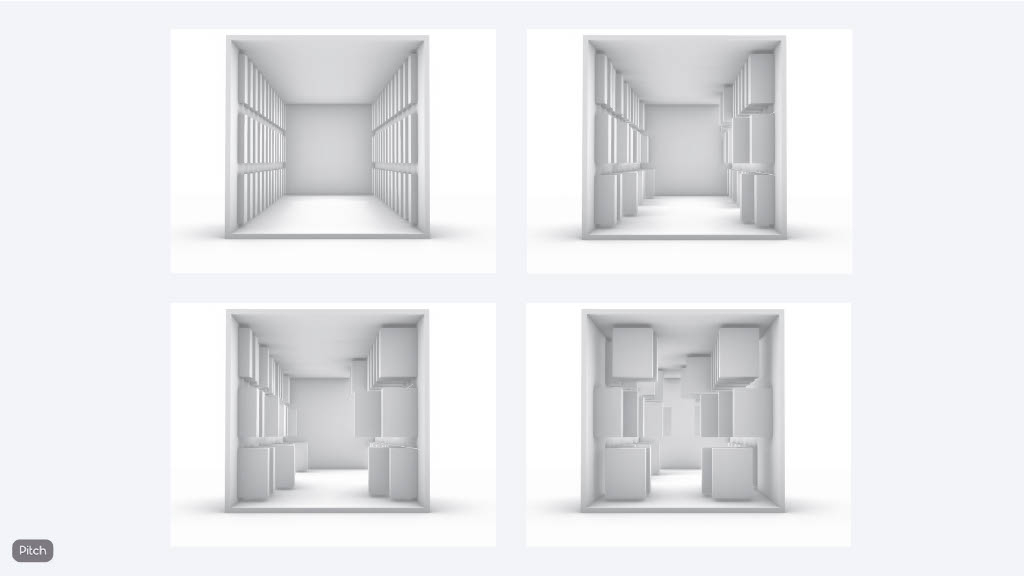

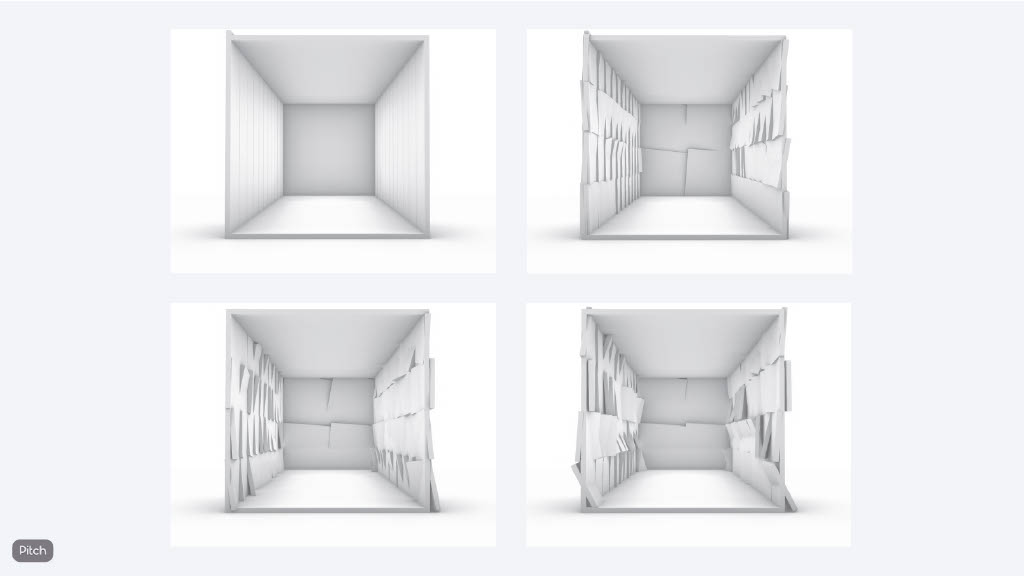

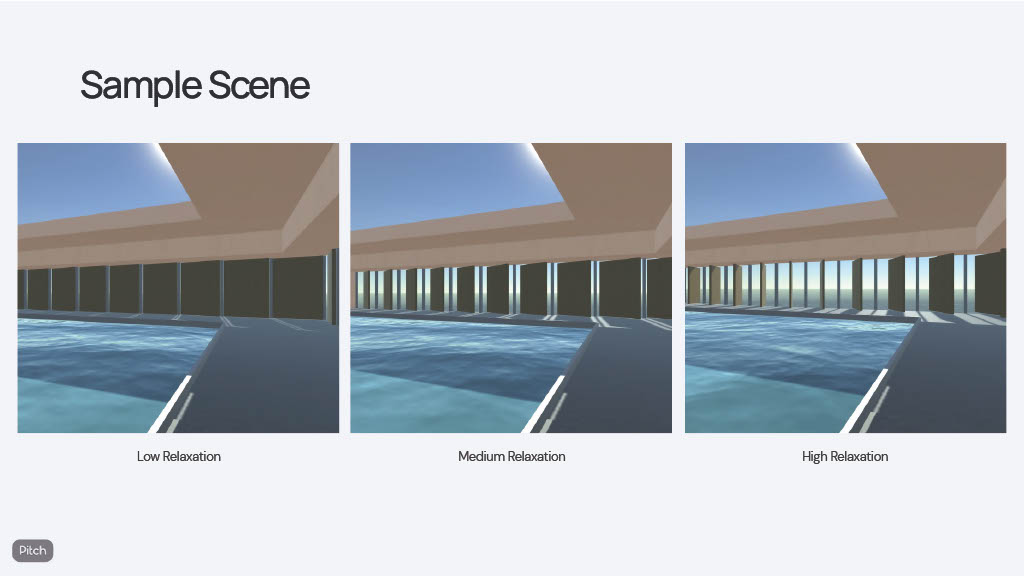

2. Architectural Elements

Larger windows are associated with higher post-occupancy satisfaction and lower self-reported depression. Not only can windows bring in natural light, they can also increase the range of spatial visibility and dramatically elevate the sense of spaciousness. In this iteration of the experimental design, we linked the user's relaxation level to the degree of window openness.
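One way to picture this link is a small controller that eases the window toward a target openness derived from the relaxation level. This is a minimal sketch: the class name `WindowController`, the [0, 1] relaxation range, the openness limits, and the exponential smoothing step are all assumptions for illustration, not details taken from the project.

```python
class WindowController:
    """Eases window openness toward a target set by the relaxation level."""

    def __init__(self, smoothing: float = 0.1,
                 min_open: float = 0.2, max_open: float = 1.0) -> None:
        self.smoothing = smoothing  # fraction of the remaining gap closed per update
        self.min_open = min_open    # openness at zero relaxation (assumed)
        self.max_open = max_open    # openness at full relaxation (assumed)
        self.openness = min_open

    def update(self, relaxation: float) -> float:
        """Take a relaxation value (assumed in [0, 1]) and return the new openness."""
        r = max(0.0, min(1.0, relaxation))
        target = self.min_open + r * (self.max_open - self.min_open)
        # Move only part of the way so noisy readings do not make the window jitter.
        self.openness += self.smoothing * (target - self.openness)
        return self.openness


controller = WindowController()
for reading in [0.3, 0.6, 0.9, 0.9]:
    print(round(controller.update(reading), 3))
```

Smoothing is a design choice in this sketch: a relaxation signal that fluctuates would otherwise make the window resize abruptly.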

3. Current work in progress

These are our most recent designs. We are implementing these abstract transformations in VR space.

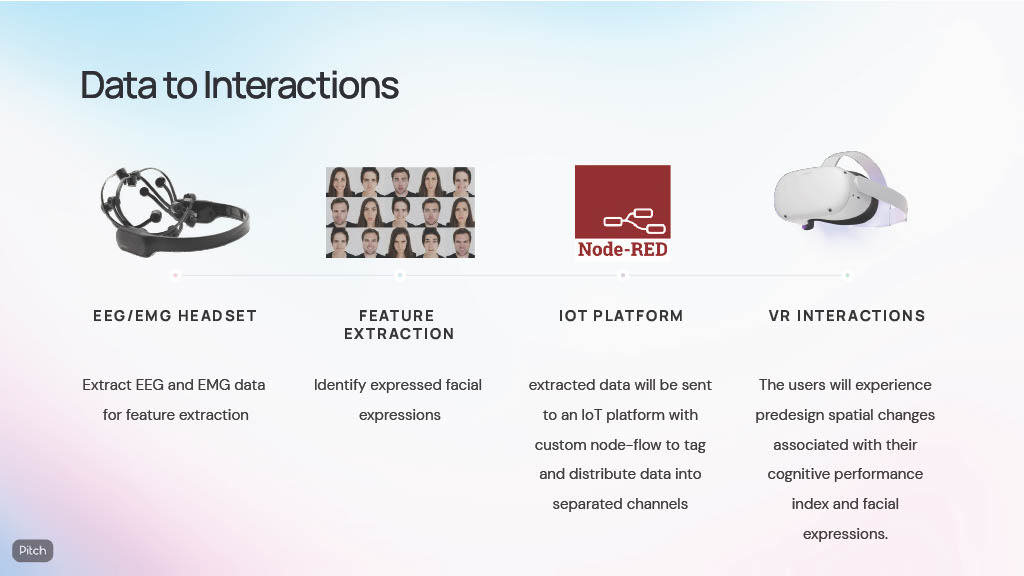

4. Tech Spec

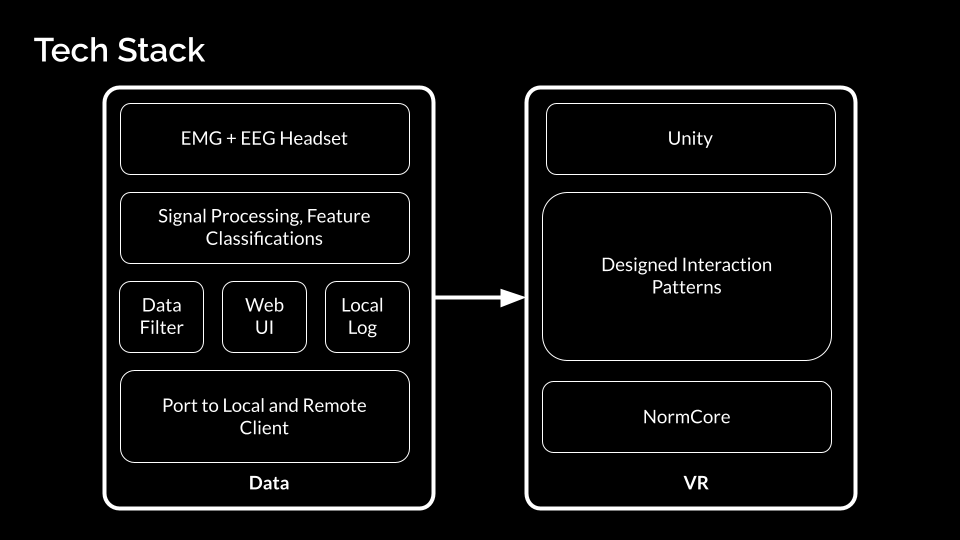

To achieve a real-time responsive VR environment driven by brain signals, we created a pipeline that processes the data from the Emotiv BCI and then passes it to the Oculus Quest 2. We use the Unity XR package for locomotion and Normcore for the real-time multi-user experience.
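The processing step between the BCI and the headset could look something like the sketch below. It is only an illustration under stated assumptions: the BCI readings are stood in for by plain dictionaries with a hypothetical `relaxation` key, the moving-average window is arbitrary, and no Emotiv, Unity, or Normcore API is used. How the messages reach the headset is not specified here.

```python
import json
from collections import deque
from typing import Deque, Dict, Iterable, Iterator


def smooth_and_encode(samples: Iterable[Dict[str, float]],
                      window: int = 5) -> Iterator[str]:
    """Yield one JSON message per sample, carrying a moving-average value.

    `samples` stands in for a stream of BCI readings; the "relaxation"
    key is a hypothetical field name chosen for this sketch.
    """
    recent: Deque[float] = deque(maxlen=window)
    for sample in samples:
        recent.append(sample["relaxation"])
        average = sum(recent) / len(recent)
        yield json.dumps({"relaxation": round(average, 3)})


# Simulated readings in place of a live BCI stream.
readings = [{"relaxation": 0.2}, {"relaxation": 0.8}, {"relaxation": 0.5}]
for message in smooth_and_encode(readings, window=2):
    print(message)
```

Each message is a small, self-describing payload that a VR client could parse on every update to drive the environment.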